You cannot safely “set and forget” prebuilt container images, even when they come from official registries.

Most real-world images contain outdated packages with known vulnerabilities, embedded secrets, and opaque dependency chains. Trust in container images must be engineered and continuously verified. It cannot be inherited from a registry badge or an “official” label.

The Hidden Trust Assumptions Behind Prebuilt Images

When you run docker pull against a popular registry, you are implicitly trusting several things:

- The publisher’s build pipeline has not been compromised

- The dependencies included in the image are safe and current

- The tag you pulled will not silently change tomorrow

A registry guarantees content distribution, not build integrity.

Large-scale analyses consistently show how fragile these assumptions are. One academic study of 33,952 Docker Hub images found that 93.7% contained known vulnerabilities, thousands contained leaked secrets, and dozens were outright malicious.

If your strategy is “pull the official image and scan it,” you are relying on assumptions that do not match how container ecosystems actually work.

Why Official Registries Are Treated as Trusted Sources

Teams treat Docker Hub, GitHub Container Registry, and major cloud registries as de facto trust anchors for predictable reasons:

- Brand recognition of publishers such as Nginx, Redis, or Ubuntu

- Convenience compared to building from scratch

- The perception that “official” implies continuous maintenance

However, empirical data shows that many high-pull images contain hundreds of vulnerabilities and hundreds of components, many of them years old.

“Official” means maintained by a known publisher. It does not mean minimal, hardened, reproducible, or supply-chain verified.

This is exactly the problem we set out to address. Instead of assuming “official equals safe,” we rebuild and curate base images in a controlled environment, reduce inherited CVEs, generate verifiable SBOMs, and enforce attestation and signing from the start.

What Registries Actually Guarantee (and What They Don’t)

Registries are excellent distribution systems. They guarantee:

- Availability of images and layers

- Basic authentication and access control

- Optional vulnerability scanning badges

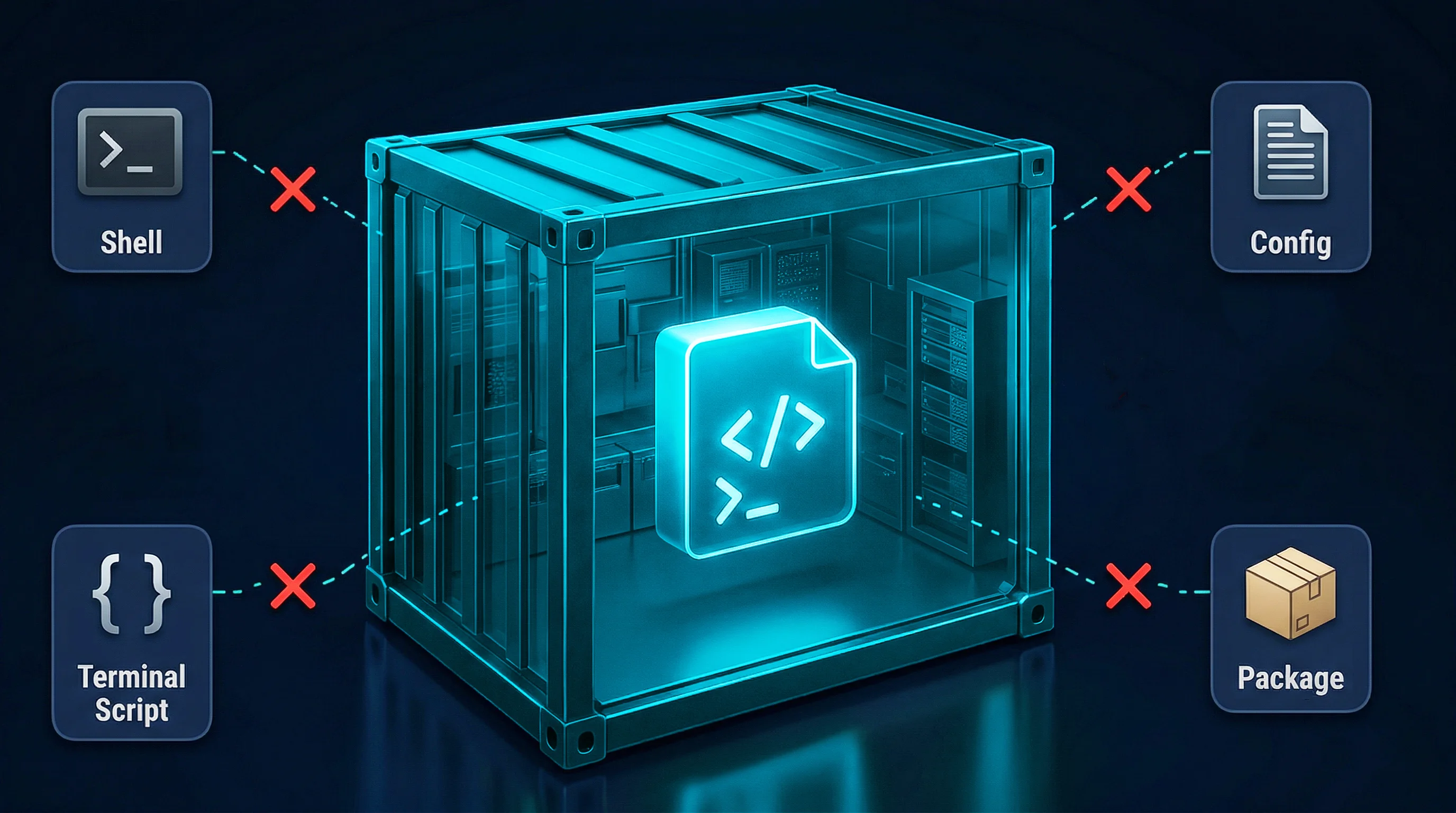

They do not guarantee:

- That the image was built from the source you expect

- That the build pipeline was secure

- That tags are immutable

- That upstream dependencies were not compromised

Content integrity does not imply build integrity.


Research from Trend Micro analyzing exposed container registries identified hundreds of publicly accessible repositories containing thousands of images and terabytes of downloadable data without authentication.

This is why we operate on a simple principle: security must begin at the source. We control who can publish, how images are built, and what verification conditions must be met before release.

OCI Mechanics: Tags vs Digests (and Why It Matters)

The Open Container Initiative (OCI) image specification defines tags as mutable references and digests as content-addressable identifiers.

- Tags like nginx:latest are mutable labels.

- Digests like nginx@sha256:abc123… are immutable references to a specific manifest and its layers.

Most workflows rely on tags. That is a structural weakness.

If a tag is overwritten after a scan, the artifact deployed later may not be the artifact that was approved. Digest pinning prevents silent drift, but it only guarantees content integrity. It does not guarantee that the artifact was built securely.

Integrity verifies what you have.

Provenance verifies how it was produced.
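To make the tag/digest distinction concrete, here is a toy, in-memory model of a registry. It is a sketch under simplifying assumptions, not how any real registry is implemented: the `ToyRegistry` class and the layer names are hypothetical placeholders, and the digest is computed as SHA-256 over canonical JSON rather than over the exact manifest bytes a registry stores. It shows that a tag lookup returns whatever the tag points to now, while a digest lookup always returns the same content.

```python
import hashlib
import json


def manifest_digest(manifest: dict) -> str:
    """Content-address a manifest as sha256 over a canonical JSON encoding."""
    raw = json.dumps(manifest, sort_keys=True, separators=(",", ":")).encode()
    return "sha256:" + hashlib.sha256(raw).hexdigest()


class ToyRegistry:
    """Hypothetical registry: manifests keyed by digest, tags as movable pointers."""

    def __init__(self) -> None:
        self.manifests = {}  # digest -> manifest (content-addressed, never rewritten)
        self.tags = {}       # tag -> digest (can be overwritten at any time)

    def push(self, tag: str, manifest: dict) -> str:
        digest = manifest_digest(manifest)
        self.manifests[digest] = manifest
        self.tags[tag] = digest  # re-pushing a tag silently repoints it
        return digest

    def resolve(self, ref: str) -> dict:
        if ref.startswith("sha256:"):
            return self.manifests[ref]          # digest: always the same content
        return self.manifests[self.tags[ref]]  # tag: whatever it points to now


registry = ToyRegistry()
approved = registry.push("nginx:latest", {"layers": ["layer-v1"]})  # scanned and approved
registry.push("nginx:latest", {"layers": ["layer-v2"]})             # tag overwritten later

print(registry.resolve("nginx:latest") == registry.resolve(approved))  # False: the tag drifted
print(registry.resolve(approved))  # {'layers': ['layer-v1']}
```

Deploying by the digest recorded at approval time closes this drift. It still says nothing about how the content behind that digest was built.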

Integrity vs Provenance: The Trust Spectrum

Container trust has two distinct components:

Integrity

Cryptographic verification that the image has not been modified since it was signed. Integrity confirms that the artifact you hold is the artifact that was signed.

Provenance

Attested metadata describing the source repository, build inputs, environment, and pipeline that produced the image. Provenance lets you confirm it was built by the process you expect.

You can have a perfectly signed, cryptographically intact image that was built in a compromised CI environment. That image has integrity but lacks trustworthy provenance.

We enforce both. Every base image we ship is signed, accompanied by a complete SBOM, and built within a controlled, verifiable pipeline aligned with modern supply chain standards.

High-assurance supply chain security requires enforcing integrity and provenance at both build time and deploy time.
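The two checks can be separated in code. The sketch below assumes provenance arrives as an in-toto Statement carrying a SLSA v1 provenance predicate, trimmed to the few fields it reads. The builder ID and repository URL are hypothetical placeholders, and verifying the signature over the statement itself is deliberately left out. The example image passes the integrity check yet fails the provenance check.

```python
import hashlib

TRUSTED_BUILDERS = {"https://ci.example.com/builders/trusted@v1"}  # hypothetical builder ID
EXPECTED_SOURCE = "git+https://github.com/example/base-images"     # hypothetical repository


def check_integrity(artifact: bytes, statement: dict) -> bool:
    """Integrity: the artifact's sha256 matches one of the statement's subjects."""
    actual = hashlib.sha256(artifact).hexdigest()
    return any(s.get("digest", {}).get("sha256") == actual
               for s in statement.get("subject", []))


def check_provenance(statement: dict) -> bool:
    """Provenance: a trusted builder produced it from the expected source."""
    if statement.get("predicateType") != "https://slsa.dev/provenance/v1":
        return False
    predicate = statement.get("predicate", {})
    builder_id = predicate.get("runDetails", {}).get("builder", {}).get("id")
    deps = predicate.get("buildDefinition", {}).get("resolvedDependencies", [])
    from_expected_source = any(d.get("uri", "").startswith(EXPECTED_SOURCE) for d in deps)
    return builder_id in TRUSTED_BUILDERS and from_expected_source


image = b"example image bytes"
statement = {
    "_type": "https://in-toto.io/Statement/v1",
    "subject": [{"name": "base-image", "digest": {"sha256": hashlib.sha256(image).hexdigest()}}],
    "predicateType": "https://slsa.dev/provenance/v1",
    "predicate": {
        "buildDefinition": {"resolvedDependencies": [{"uri": EXPECTED_SOURCE + "@refs/heads/main"}]},
        "runDetails": {"builder": {"id": "https://ci.attacker.example/runner"}},  # untrusted CI
    },
}
print(check_integrity(image, statement), check_provenance(statement))  # True False
```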

Rebuild Variance and Non-Deterministic Container Images

Even with the original Dockerfile, rebuilding an “official” image rarely produces a bit-for-bit identical artifact.

Common causes include:

- Non-pinned package installs such as apt-get install python3

- Timestamps and build IDs embedded in binaries

- Pulling live upstream packages during build
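Unpinned installs are easy to spot mechanically. The following is a rough heuristic, assuming Debian-style `apt-get install` commands inside `RUN` instructions; it is not a Dockerfile parser, and the function name and package version are illustrative. Pinning narrows variance but does not make a build hermetic, since the package repository can still stop serving a pinned version.

```python
import re
import shlex

# "apt-get [flags] install <args>"; group 1 captures everything after "install".
APT_INSTALL = re.compile(r"apt-get\s+(?:-\S+\s+)*install\b(.*)")


def unpinned_apt_packages(dockerfile: str) -> list[str]:
    """Heuristic: apt packages installed in RUN lines without a name=version pin."""
    joined = dockerfile.replace("\\\n", " ")  # fold backslash line continuations
    unpinned = []
    for line in joined.splitlines():
        line = line.strip()
        if not line.upper().startswith("RUN "):
            continue
        for command in re.split(r"&&|;", line[4:]):
            match = APT_INSTALL.search(command)
            if match is None:
                continue
            for token in shlex.split(match.group(1)):
                if token.startswith("-"):
                    continue  # flags such as -y or --no-install-recommends
                if "=" not in token:
                    unpinned.append(token)
    return unpinned


dockerfile = """FROM debian:bookworm
RUN apt-get update && \\
    apt-get install -y --no-install-recommends python3 curl=7.88.1-10
"""
print(unpinned_apt_packages(dockerfile))  # ['python3']
```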

Most container builds are non-hermetic. They rely on external repositories at build time. That means the resulting artifact is partially defined by whatever those repositories served at that moment.

Two builds of the same Dockerfile, hours apart, can produce different artifacts.

If a build cannot be deterministically reproduced, its provenance cannot be independently verified.
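Embedded timestamps alone are enough to change an artifact's digest. This sketch packs the same file into an uncompressed tar layer twice with different modification times, then twice with one fixed time, in the spirit of the `SOURCE_DATE_EPOCH` convention promoted by the Reproducible Builds project. The `build_layer` helper is hypothetical, and real image builders have many more sources of variance than this one field.

```python
import hashlib
import io
import tarfile


def build_layer(files: dict, mtime: int) -> str:
    """Pack files into an uncompressed tar layer and return its sha256 digest.

    Every header field except mtime is fixed (tarfile defaults: uid/gid 0,
    empty owner names, mode 0o644), and entries are added in sorted order.
    """
    buf = io.BytesIO()
    with tarfile.open(fileobj=buf, mode="w") as tar:
        for name in sorted(files):
            data = files[name]
            info = tarfile.TarInfo(name)
            info.size = len(data)
            info.mtime = mtime  # the only varying input in this sketch
            tar.addfile(info, io.BytesIO(data))
    return "sha256:" + hashlib.sha256(buf.getvalue()).hexdigest()


files = {"app/main.py": b"print('hello')\n"}

# Naive builds: each run embeds its own wall-clock time (simulated here).
first = build_layer(files, mtime=1_700_000_000)
second = build_layer(files, mtime=1_700_003_600)  # same inputs, one hour later

# Reproducible builds: every run uses the same fixed timestamp.
fixed_a = build_layer(files, mtime=0)
fixed_b = build_layer(files, mtime=0)

print(first == second, fixed_a == fixed_b)  # False True
```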

This is precisely why frameworks such as Supply-chain Levels for Software Artifacts (SLSA), originally developed at Google and now maintained under the OpenSSF, emphasize hermetic builds, declared dependencies, and verifiable provenance. Reproducible Builds initiatives similarly argue that independent parties must be able to recreate identical artifacts from source to establish trust in the software supply chain.

These frameworks exist because without hermetic, reproducible builds, artifact integrity and provenance cannot be independently verified across organizations.

We mitigate this by rebuilding base images in controlled environments, minimizing external variance, generating SBOMs at build time, and maintaining a continuously verified image set.

Threat Model: A Realistic Supply Chain Attack

Consider a simple scenario:

1. An attacker compromises a maintainer’s account or CI token.

2. They inject a malicious dependency during the build process.

3. The pipeline signs the resulting container image and publishes it to an official registry.

4. Downstream users pull the image by digest.

Every check then passes. The SHA-256 digest confirms the image is intact, and the signature confirms it was signed with the publisher’s key.

What neither mechanism verifies is whether the build process itself was trustworthy.

Without provenance enforcement and admission control, malicious artifacts can move through the pipeline undetected.
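One way provenance enforcement can catch this scenario is by comparing the dependencies recorded in provenance against a reviewed lockfile. The sketch assumes provenance is generated by the build platform rather than by build steps the attacker controls (the property SLSA Build Level 3 aims for); otherwise the attacker could simply omit the injected dependency. It uses an HMAC over the statement as a stand-in for a real signature. HMAC is symmetric, unlike Sigstore or Cosign signatures, and the key, lockfile format, placeholder digests, and package names are all hypothetical.

```python
import hashlib
import hmac
import json

PUBLISHER_KEY = b"hypothetical-demo-key"  # stand-in for a real signing key


def sign(statement: dict, key: bytes) -> str:
    """HMAC-SHA256 over canonical JSON; a symmetric stand-in for a real signature."""
    payload = json.dumps(statement, sort_keys=True).encode()
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def admit(statement: dict, signature: str, lockfile: dict) -> tuple:
    """Hypothetical admission check: verify the signature, then every recorded dependency."""
    if not hmac.compare_digest(sign(statement, PUBLISHER_KEY), signature):
        return False, "bad signature"
    deps = statement["predicate"]["buildDefinition"]["resolvedDependencies"]
    for dep in deps:
        expected = lockfile.get(dep["uri"])
        if expected is None:
            return False, f"undeclared dependency: {dep['uri']}"
        if dep["digest"]["sha256"] != expected:
            return False, f"digest mismatch: {dep['uri']}"
    return True, "admitted"


lockfile = {"pkg:pypi/requests@2.31.0": "aa" * 32}  # reviewed dependency -> expected sha256
statement = {"predicate": {"buildDefinition": {"resolvedDependencies": [
    {"uri": "pkg:pypi/requests@2.31.0", "digest": {"sha256": "aa" * 32}},
    {"uri": "pkg:pypi/requestz@1.0.0", "digest": {"sha256": "bb" * 32}},  # injected
]}}}
signature = sign(statement, PUBLISHER_KEY)  # the compromised pipeline still signs

print(admit(statement, signature, lockfile))
# (False, 'undeclared dependency: pkg:pypi/requestz@1.0.0')
```

The signature check alone would have admitted this image; the rejection comes entirely from the dependency comparison.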

Dependency Aggregation and Upstream Risk

Container images aggregate operating system packages, language libraries, build tools, and utilities from multiple upstream ecosystems.

Analysis of high-pull Docker Hub images by NetRise found an average of nearly 400 software components per container, with hundreds of inherited vulnerabilities.

Each additional component increases inherited risk and attack surface.
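An SBOM makes that aggregation measurable. The sketch below reads a CycloneDX-style JSON document, counts components by type, and matches package URLs (purls) against an advisory feed. The feed format, package names, and advisory IDs are placeholders; a real pipeline would query a vulnerability database and evaluate version ranges rather than exact purl matches.

```python
import json
from collections import Counter


def summarize_sbom(sbom_json: str, advisories: dict) -> dict:
    """Count SBOM components and list those matching an advisory feed by exact purl."""
    components = json.loads(sbom_json).get("components", [])
    by_type = Counter(c.get("type", "unknown") for c in components)
    affected = {c["purl"]: advisories[c["purl"]]
                for c in components if c.get("purl") in advisories}
    return {"components": len(components), "by_type": dict(by_type), "affected": affected}


sbom = json.dumps({
    "bomFormat": "CycloneDX",
    "specVersion": "1.5",
    "components": [
        {"type": "library", "name": "libfoo", "version": "1.0", "purl": "pkg:deb/example/libfoo@1.0"},
        {"type": "library", "name": "libbar", "version": "2.3", "purl": "pkg:deb/example/libbar@2.3"},
        {"type": "application", "name": "tool", "version": "0.9", "purl": "pkg:generic/tool@0.9"},
    ],
})
advisories = {"pkg:deb/example/libfoo@1.0": ["EXAMPLE-ADVISORY-1"]}  # placeholder feed

print(summarize_sbom(sbom, advisories))
```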

This is why we follow a “Bigger Images, Bigger Risk” philosophy. We deliberately reduce attack surface, remove unnecessary packages, and start from minimal, hardened foundations before application code is ever added.

Why Scanning Prebuilt Images Is Not Enough

Static image scanning is necessary but insufficient.

Scanning:

- Detects known CVEs

- Flags misconfigurations, if the scanner is configured to check for them

Scanning does not:

- Detect zero-day vulnerabilities

- Prove build pipeline integrity

- Detect dependency substitution during build

- Guarantee the scanned artifact is the deployed artifact if tags are mutable

Scanning inspects content. It does not verify process integrity.
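The last gap in the list above, scanning one artifact and deploying another, can be narrowed with a simple deploy-time gate: accept only digest-pinned references whose digest appears in the set of scanned artifacts. The regular expression and function below are illustrative assumptions and a simplification of the full image reference grammar.

```python
import re

# repository[:tag]@sha256:<64 hex chars>; a simplification of the real reference grammar.
DIGEST_REF = re.compile(r"^[^@\s]+@(sha256:[0-9a-f]{64})$")


def deploy_allowed(image_ref: str, scanned_digests: set) -> bool:
    """Admit only digest-pinned references whose digest was actually scanned."""
    match = DIGEST_REF.match(image_ref)
    if match is None:
        return False  # tag-only reference: the content behind it may have changed
    return match.group(1) in scanned_digests


scanned = {"sha256:" + "ab" * 32}  # placeholder digest recorded by the scanner

print(deploy_allowed("nginx:latest", scanned))               # False
print(deploy_allowed("nginx@sha256:" + "ab" * 32, scanned))  # True
print(deploy_allowed("nginx@sha256:" + "cd" * 32, scanned))  # False: never scanned
```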

Industry threat reports from the Cloud Native Computing Foundation (CNCF) and other cloud-native security research consistently show that misconfigurations, vulnerable base images, and supply chain weaknesses remain leading causes of container-related incidents. The persistent issue is not the absence of scanning, but misplaced confidence in what scanning alone can prove.

Our approach is to embed security into the base image itself, then make scanning more effective by starting from a cleaner foundation with accurate, build-time SBOMs and reduced inherited noise.

What Verifiable Container Image Trust Actually Requires

To truly trust a container image, you must answer three questions:

1. What is inside the image?

- Complete SBOM with packages and versions

- Clear CVE mapping and prioritization

2. Who built it and how?

- Provenance attestations describing build inputs and environment

- Cryptographic signing of artifacts and metadata

3. Has it been altered?

- Digest verification

- Signature enforcement at deploy time

- Registry immutability controls

In practice, this means adopting SLSA-aligned build pipelines, signing artifacts with Sigstore tooling such as Cosign, and enforcing admission policies that reject unsigned or unverified artifacts.

Our platform is built around this model. We maintain hardened base images, generate Software Bills of Materials (SBOMs) at build time, enforce signing and verification, and align with modern supply chain integrity standards so trust is established before your application code is introduced.

Conclusion: Trust Must Be Built, Not Inherited

The data is consistent:

- The majority of popular images contain known vulnerabilities

- Many contain excessive components and legacy packages

- Secrets and misconfigurations are widespread

This does not mean prebuilt images are unusable. It means registry reputation is not a security model.

Real trust in container images requires:

- Transparent contents

- Deterministic and verifiable builds

- Cryptographic integrity enforcement

- Strong provenance guarantees

- Continuous attack surface reduction

We believe container trust must be engineered at the foundation. By standardizing on verified, hardened base images and layering your own SLSA-aligned CI/CD, signing, and policy enforcement on top, you move from assumed trust to cryptographically enforceable trust.

FAQs

1. Why isn’t pulling official images and scanning them enough?

Scanning detects known CVEs but does not verify provenance, tag stability, or build integrity. We provide curated, signed, provenance-backed base images so trust is embedded from the start.

2. How do we reduce inherited container vulnerabilities?

We maintain CVE-reduced, hardened base images and generate precise SBOMs so you inherit fewer vulnerabilities and can focus remediation efforts where they matter.

3. How do we improve Software Composition Analysis accuracy?

By generating high-quality SBOMs during build time, we provide accurate component visibility that reduces false positives and improves compliance reporting.

4. How do we help with attack surface reduction?

We deliberately minimize included packages and remove unnecessary components, ensuring you start from a smaller, hardened foundation.

5. Why not build everything in-house?

Rebuilding, verifying, signing, and continuously maintaining secure, reproducible base images requires specialized supply chain expertise. We provide that foundation so your teams can focus on delivering features while retaining provable trust in what they deploy.
