Container security often starts after the problem is already in the image.

In most workflows, images are built first and secured later through scanning and patching. While this approach helps reduce known vulnerabilities, it rarely addresses the root cause. Teams end up in a cycle of fixing, rebuilding, and rescanning, with each iteration bringing only incremental improvement.

Over time, it becomes clear that the issue is not just vulnerabilities in the application, but the composition of the container itself. Most container security approaches optimize for detecting vulnerabilities after an image is built. A more effective approach is to eliminate the conditions that introduce those vulnerabilities in the first place.

The Hidden Weight Inside Containers

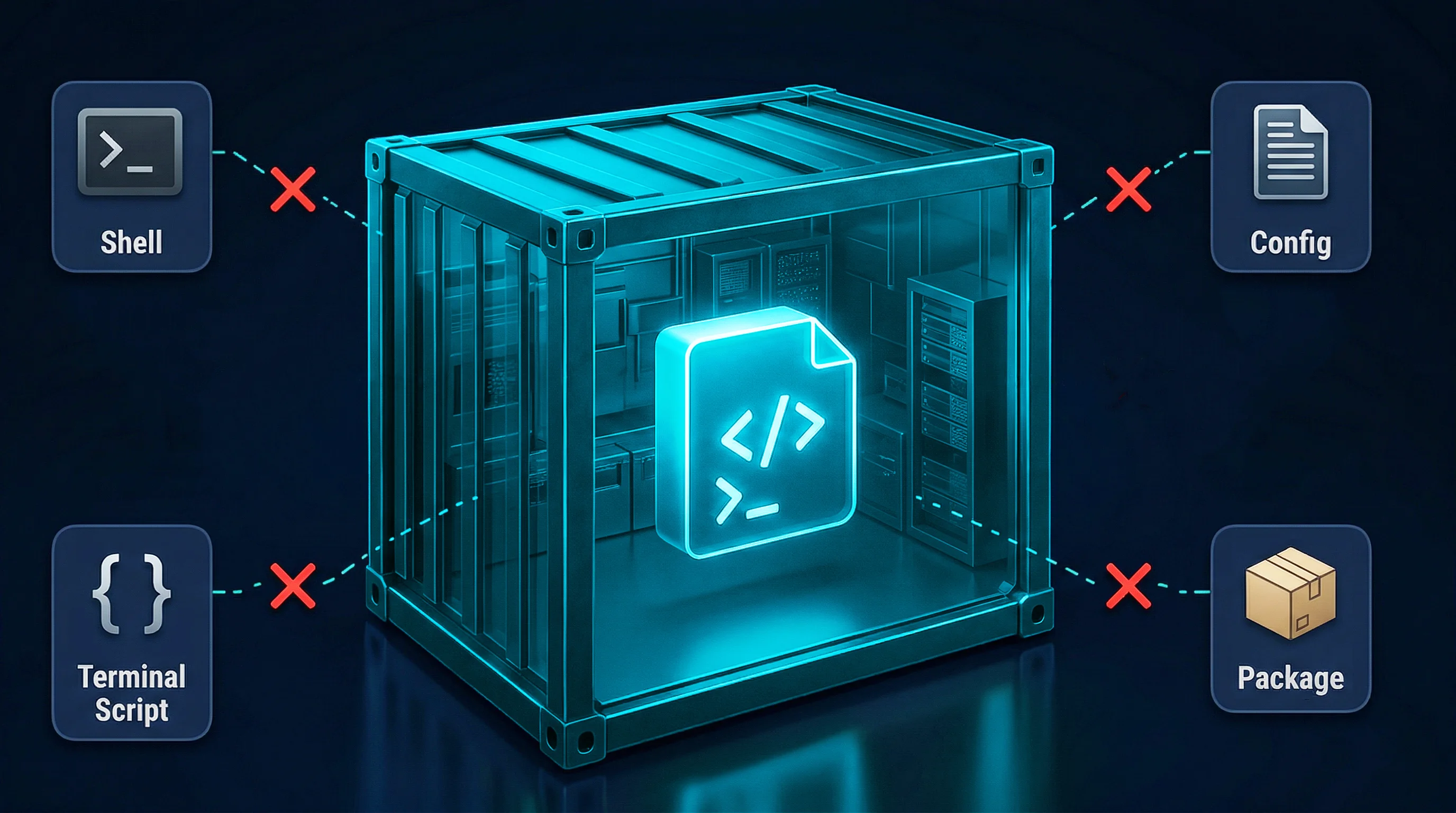

Modern container images often include far more than what is strictly required to run an application. Base images are designed for flexibility and convenience, so they come bundled with shells, package managers, debugging tools, and a wide range of system utilities. These are incredibly useful during development, but once the application is deployed, they quietly become liabilities.

Each additional component increases the attack surface. Even if a tool is never used in production, its presence alone can introduce vulnerabilities, trigger alerts in scanners, and provide attackers with capabilities they should never have had in the first place.

What initially looks like a vulnerability problem is often a composition problem: the container simply contains too much.
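One way to make the composition problem concrete is to list an image's files and flag the ones that exist only for convenience. The sketch below assumes the file list has already been exported, for example from an unpacked image tarball. The `CONVENIENCE_TOOLS` table and the `flag_convenience_tools` helper are illustrative names rather than part of any scanner, and the table is deliberately incomplete.

```python
import posixpath

# Illustrative, incomplete table of binaries that help during development
# but are rarely needed by a running service.
CONVENIENCE_TOOLS = {
    "shell": {"sh", "bash", "dash", "ash", "zsh"},
    "package manager": {"apt", "apt-get", "dpkg", "apk", "yum", "dnf", "rpm"},
    "network utility": {"curl", "wget", "nc", "ncat", "telnet"},
}


def flag_convenience_tools(paths):
    """Return {category: sorted paths} for files whose basename is a known tool."""
    flagged = {}
    for path in paths:
        name = posixpath.basename(path)
        for category, names in CONVENIENCE_TOOLS.items():
            if name in names:
                flagged.setdefault(category, []).append(path)
    return {category: sorted(found) for category, found in flagged.items()}
```

Anything this reports is a candidate for removal from a production image, not proof of a vulnerability; the point is that the list is usually longer than expected.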

The Limits of Conventional Hardening

To address this, teams typically adopt one of two strategies.

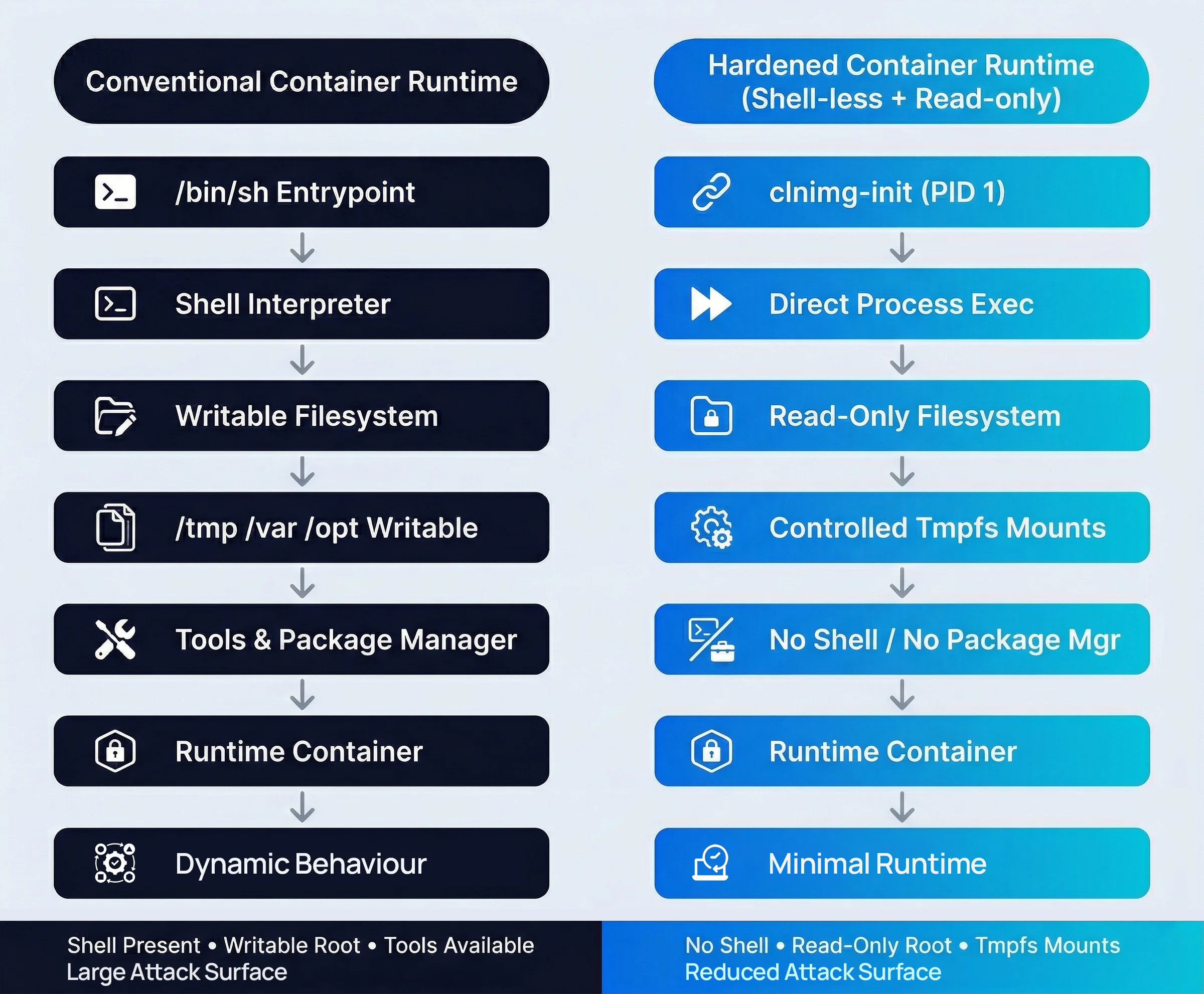

The first is to remove the shell and other debugging tools. This reduces the ability to execute arbitrary commands inside the container, which is a meaningful improvement. However, the filesystem often remains writable. If an attacker finds a way to write files through the application itself, they may still be able to execute malicious code using the application runtime.

The second approach is to enforce a read-only filesystem. This prevents modification of binaries and configuration files, ensuring that the container remains consistent with the image. But in this case, the container often still includes a shell and networking utilities. These can be used for lateral movement or data exfiltration if the container is compromised.

Each approach strengthens security in isolation, but neither achieves a truly minimal runtime environment. And combining them turns out to be more difficult than it seems.

The Dependency Paradox

The challenge lies in what we might call a dependency paradox.

Many applications implicitly rely on behaviors typically provided by a shell or a writable filesystem. Environment variables are expanded, signals are handled, temporary files are created, and runtime state is stored in expected locations. These behaviors are so common that they often go unnoticed—until they are removed.

When the shell is eliminated, startup scripts may fail or environment variables may not be processed correctly. When the filesystem is locked, applications may crash when attempting to write to default paths such as /tmp or /var/run.
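Environment expansion is a good example of this hidden reliance. An entrypoint such as `server --port $PORT` only works because a shell substitutes `$PORT` before the program starts. The sketch below shows the same substitution done explicitly, with no shell involved. `expand_args` is a hypothetical helper; it relies on Python's `string.Template`, which understands `$NAME` and `${NAME}` but none of the shell's richer forms such as `${NAME:-default}`.

```python
from string import Template


def expand_args(argv, env):
    """Expand $NAME / ${NAME} in each argument; fail loudly on unset names."""
    expanded = []
    for arg in argv:
        try:
            expanded.append(Template(arg).substitute(env))
        except KeyError as exc:
            # A shell would silently substitute an empty string here.
            raise ValueError(
                f"unset variable {exc.args[0]!r} in argument {arg!r}"
            ) from None
    return expanded
```

Failing on an unset variable is a deliberate choice in this sketch: a shell would quietly pass an empty string, which is exactly the kind of implicit behavior that goes unnoticed until the shell is gone.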

As a result, most production images retain at least one of these capabilities. If the shell is removed, the filesystem is usually left writable. If the filesystem is made read-only, the shell is often kept for initialization and debugging. The result looks like an unavoidable tradeoff between usability and security.


Replacing the Shell with a Hardened Init

In many containers, the first process that runs is a shell executing a startup script. This approach is flexible and convenient during development, but it introduces unnecessary complexity and risk in production environments.

To solve this problem, production images built using CleanStart replace the shell with a minimal, purpose-built init process (clnimg-init) that manages application startup and lifecycle behavior.

The clnimg-init process performs only the essential tasks required to start and manage the application. It handles responsibilities such as signal processing, environment validation, and launching the main application process—functions that are traditionally managed by shell scripts.
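The source does not describe the internals of clnimg-init, so the following is only a sketch of the three responsibilities named above, written in Python for readability even though the real init is a compiled binary. The names `missing_env` and `run_app`, and the choice to forward only `SIGTERM` and `SIGINT`, are assumptions made for illustration.

```python
import os
import signal
import subprocess

# Signals relayed to the application (an illustrative choice).
FORWARDED = (signal.SIGTERM, signal.SIGINT)


def missing_env(required, env):
    """Return the required variable names that are unset or empty."""
    return [name for name in required if not env.get(name)]


def run_app(argv, required=(), env=None):
    """Validate the environment, start argv without a shell, relay signals."""
    env = dict(os.environ if env is None else env)
    missing = missing_env(required, env)
    if missing:
        raise RuntimeError(
            "missing required environment variables: " + ", ".join(missing)
        )

    # argv is a list, so no shell parses or expands the command line.
    child = subprocess.Popen(argv, env=env)

    def forward(signum, _frame):
        child.send_signal(signum)

    # Must run in the main thread, as an init process would.
    previous = {sig: signal.signal(sig, forward) for sig in FORWARDED}
    try:
        return child.wait()  # the application's exit status
    finally:
        for sig, handler in previous.items():
            signal.signal(sig, handler)
```

A complete init running as PID 1 would also reap orphaned child processes, which this sketch leaves out.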

Because the binary is statically compiled, it does not depend on shared libraries or external components. This significantly reduces runtime dependencies and removes an entire class of potential vulnerabilities.

Once the application starts, there is no shell or interpreter left inside the container. All lifecycle management is handled internally in a controlled and predictable way.

In effect, CleanStart resolves the dependency on shell behavior by replacing it with a minimal, hardened init layer, allowing the container to operate without the risks associated with a general-purpose shell.

Enforcing an Immutable Root Filesystem

Removing the shell reduces the number of tools available at runtime, but it does not prevent modification of the container contents. To address this, CleanStart enforces an immutable root filesystem by mounting it as read-only in production images.

With this constraint in place, binaries and configuration files cannot be altered after deployment. The running container becomes an exact reflection of the image that was built, eliminating drift and making the system easier to reason about and audit. Rather than relying on monitoring to detect changes, this approach prevents them by design.

Because the root filesystem is guaranteed to stay unchanged, teams rely less on runtime monitoring, audits are simpler, and incident response improves.

Of course, applications still need to write data at runtime. Logs must be generated, temporary files must be created, and caches must be maintained. CleanStart does not eliminate write operations; it constrains them. The key is to limit writes to exactly the paths the application needs in order to function, rather than allowing them anywhere on the filesystem.

Discovering What the Application Actually Needs

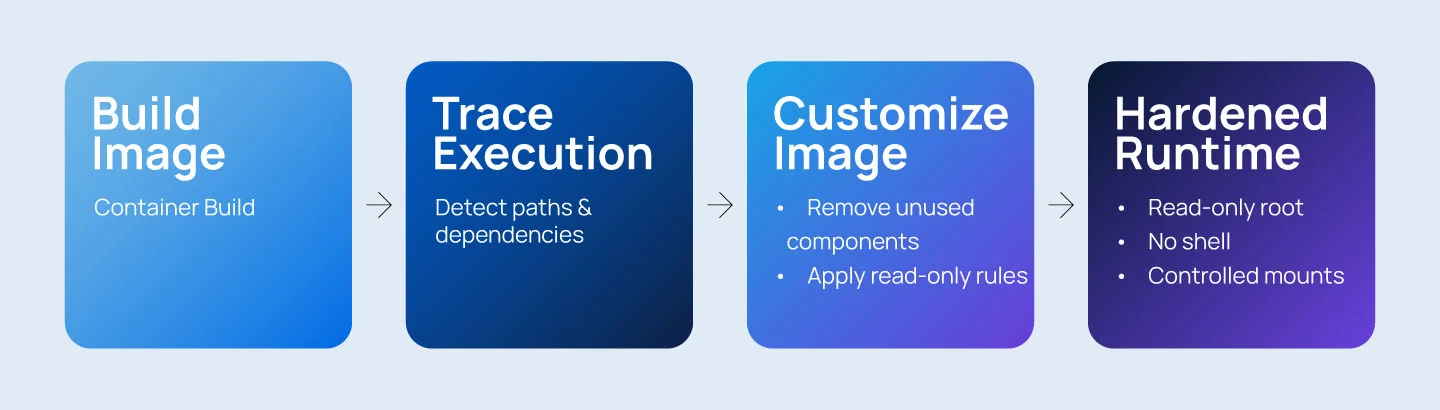

Rather than guessing which paths should remain writable, a more reliable approach is to observe the application directly.

During the build process, the application is executed in a controlled environment while file operations are monitored. Every write is recorded, producing a manifest of the minimal paths required for normal operation.

These typically include locations such as /tmp, /var/run, cache directories, and log paths.

This build-time profiling step ensures that the final configuration is based on actual behavior, not assumptions.
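The source does not say which tracing mechanism is used. As a minimal sketch, assume the profiling run was captured as strace-style text, one system call per line, and that only successful `open`/`openat` calls with write-capable flags matter. `writable_dirs` is a hypothetical helper that turns such a trace into the list of directories that must stay writable.

```python
import posixpath
import re

# Matches lines such as:
#   openat(AT_FDCWD, "/tmp/x", O_WRONLY|O_CREAT, 0644) = 3
OPEN_RE = re.compile(
    r'open(?:at)?\((?:AT_FDCWD, )?"(?P<path>[^"]+)", (?P<flags>[A-Z_|]+)'
)
WRITE_FLAGS = {"O_WRONLY", "O_RDWR", "O_CREAT", "O_APPEND", "O_TRUNC"}


def writable_dirs(trace_lines):
    """Return sorted parent directories of files successfully opened for writing."""
    dirs = set()
    for line in trace_lines:
        match = OPEN_RE.search(line)
        if not match or " = -1 " in line:  # skip non-matches and failed calls
            continue
        flags = set(match.group("flags").split("|"))
        path = match.group("path")
        if flags & WRITE_FLAGS and path.startswith("/"):
            dirs.add(posixpath.dirname(path))
    return sorted(dirs)
```

A profile like this is only as complete as the workload exercised during the run, so rarely used code paths need to be covered as well.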

Controlled Writable Mounts

At runtime, the container starts with a read-only root filesystem. Only the paths identified during profiling are mounted as writable, often using memory-backed storage. This ensures that temporary data is fast, isolated, and automatically removed when the container stops.

Writes to the profiled paths land on the writable mounts, and writes anywhere else are rejected by the read-only root. As long as profiling captured the paths the application really uses, its behavior remains unchanged from its own perspective. From a security perspective, the base filesystem remains untouched.

The result is a system that preserves functionality while strictly limiting where and how data can be written.
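How CleanStart applies these mounts is not specified here. With the Docker CLI, the same shape can be expressed with the `--read-only` and `--tmpfs` flags, and the sketch below builds that command line from a list of profiled paths. The helper name `docker_run_args`, the image name in the usage note, and the default mount options (including the 64 MB size and `noexec`) are assumptions chosen for illustration.

```python
def docker_run_args(image, writable_paths, tmpfs_opts="rw,noexec,nosuid,size=64m"):
    """Build a `docker run` argv: read-only root plus one tmpfs per writable path."""
    args = ["docker", "run", "--rm", "--read-only"]
    for path in sorted(set(writable_paths)):
        if not path.startswith("/"):
            raise ValueError(f"writable path must be absolute: {path!r}")
        # Memory-backed mount; its contents vanish when the container stops.
        args += ["--tmpfs", f"{path}:{tmpfs_opts}"]
    args.append(image)
    return args
```

For example, `docker_run_args("example/app:prod", ["/tmp", "/var/run"])` yields a command with two tmpfs mounts and a read-only root. The `noexec` option blocks execution of anything written to those mounts, which may need relaxing for applications that unpack and run helpers at startup.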

From Build to Runtime: A Consistent Pipeline

All of these steps are integrated into a build pipeline that transforms a standard container image into a hardened production artifact. The pipeline traces runtime behavior, removes unused components, enforces read-only constraints, and generates both a software bill of materials and runtime manifests.

Because this process is automated, every production image follows the same rules. Security becomes a property of the build, not a series of manual adjustments made afterward.
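The pruning and manifest steps can be pictured as set arithmetic over three inputs: the files in the image, the files the application touched while being traced, and the directories it wrote to. The sketch below is a simplified model under that assumption, not the CleanStart pipeline itself; `plan_hardening` and the manifest field names are hypothetical.

```python
import json


def plan_hardening(image_files, accessed_files, writable_dirs):
    """Return (files_to_remove, manifest_json) for a traced image."""
    image_set = set(image_files)
    accessed = set(accessed_files)
    keep = sorted(image_set & accessed)
    remove = sorted(image_set - accessed)
    manifest = {
        "read_only_root": True,
        "writable_paths": sorted(set(writable_dirs)),
        "files": keep,
    }
    # sort_keys keeps the output stable across identical builds.
    return remove, json.dumps(manifest, indent=2, sort_keys=True)
```

Because the output is deterministic for a given set of inputs, two builds of the same source produce the same manifest, which is what makes the result auditable.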

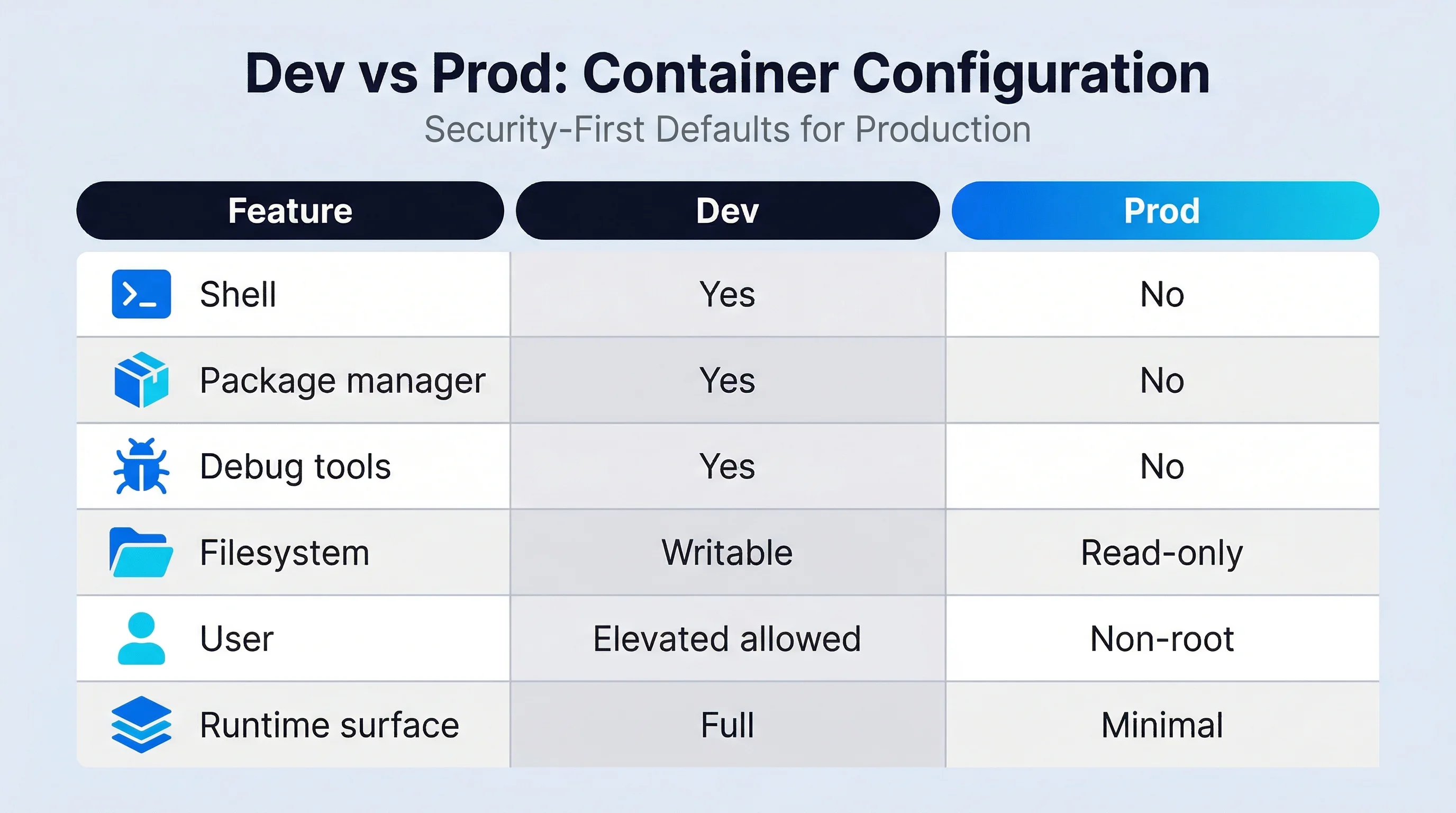

Separating Development and Production

One important aspect of this model is the separation between development and production environments.

CleanStart produces both development and production images from the same source. Development images include tools required for building and debugging, while production images are generated with a minimal runtime surface, without a shell and with a read-only root filesystem.

This ensures that application behavior remains consistent across environments while preventing unnecessary utilities and writable components from reaching production.

The difference between the two is not the application itself, but the runtime environment generated during the build.

The Operational Impact

Reducing the runtime surface has measurable effects.

Fewer components lead to fewer vulnerabilities, which in turn reduces the number of alerts generated by security scanners. Smaller images result in faster pull times and more efficient deployments. The elimination of runtime drift simplifies auditing and compliance, while the reduced complexity lowers the effort required for review and maintenance.

These benefits are not incidental; they follow directly from controlling the container's contents during the build process.

Likewise, the shell-less startup and read-only root are not runtime features to be switched on, but properties of the images produced by the CleanStart build process.

A Shift in Mindset in Container Security

What emerges from this approach is a shift in how container security is defined.

Instead of building flexible environments and securing them afterward, we build minimal environments that are secure by design. Instead of reacting to vulnerabilities, we eliminate the conditions that allow them to exist.

The safest container is not the one that has been patched the most. It is the one that contains only what it needs, cannot be modified at runtime, and exposes as little surface area as possible.

If you’d like a deeper understanding of how this approach works in practice, explore our whitepaper, Shell-less and Read-Only Container Architecture.
