Container Compliance Fundamentals for Kubernetes and Containerized Workloads

Compliance in Kubernetes is not a checkbox; it is an operating model. This article explains what container compliance means for containerized workloads, why it matters, which frameworks apply, how controls map to Kubernetes security domains, which technical controls are required, who owns them, and which common mistakes undermine compliance.

What is container compliance for Kubernetes and containerized workloads?

Container compliance for Kubernetes and containerized workloads is the discipline of proving, and continuously maintaining, that your containers and Kubernetes implementation meet defined compliance requirements and standards. You do this by applying measurable security controls, producing verifiable audit evidence, and reducing security risk across the full container lifecycle (build, deploy, run, and monitor).

In a Kubernetes environment, “compliance” is not a single setting. It is a control-and-evidence system that covers:

- Container images before deployment (image provenance, patch level, approved base images, and known vulnerability exposure through vulnerability scanning)

- Container runtime behavior after deployment (runtime drift, suspicious actions, and enforcement of security policies)

- Kubernetes clusters and workloads (cluster configuration hardening, workload isolation, and policy enforcement for Kubernetes security)

- Access control and identity paths (who can deploy, who can modify resources, and least privilege controls enforced through Kubernetes primitives)

- Sensitive data handling (how secrets and regulated data are stored, accessed, and encrypted to meet data security requirements)

- Audit readiness (whether the system can produce a chronological record of cluster actions and security-relevant events for compliance verification)

What types of containerized workloads require compliance?

Containerized workloads require compliance when their failure, compromise, or misuse creates measurable security, legal, or operational risk. The requirement is driven by data sensitivity, business criticality, regulatory exposure, and threat impact, not by the mere fact that a team runs Kubernetes or another container platform.

The following types of containerized workloads must meet compliance expectations.

- Workloads that process regulated or sensitive data require compliance because their data handling must align with security standards and maintain a verifiable security posture.

- Internet-facing production services require compliance because direct exposure increases cloud security risk and raises the likelihood of a security incident.

- Mission-critical business workloads require compliance because failure or compromise directly impacts overall security, operations, and customer trust.

- Multi-tenant or shared Kubernetes clusters require compliance because shared infrastructure increases blast radius, requiring stronger container and Kubernetes security controls to maintain isolation.

- Workloads bound by customer or contractual security requirements require compliance because you must meet compliance obligations and demonstrate adherence to security and compliance expectations.

- Privileged workloads with elevated permissions require compliance because their access can bypass controls, so container security best practices and stricter governance become mandatory.

Why is Kubernetes compliance important for modern containerized environments?

Kubernetes compliance is important for modern containerized environments because it turns fast-changing container orchestration into an auditable, enforceable security system that reduces exploitable gaps and proves security compliance over time.

In practice, Kubernetes compliance matters because it:

- Makes security controls enforceable in dynamic environments by codifying security measures as policies instead of manual configuration, which is essential when workloads scale and move automatically.

- Produces defensible audit evidence through Kubernetes audit logs and a defined audit policy, so you can prove who changed what in a Kubernetes cluster and when.

- Reduces security vulnerabilities that originate from outdated images by requiring teams to scan container images for vulnerabilities and rebuild from patched base images, which is a container-specific risk pattern.

- Prevents silent drift in runtime behavior by prioritizing runtime security controls that detect and constrain unexpected activity in running containerized applications, not just at build time.

- Enables regulated workloads to meet explicit requirements such as PCI DSS for payment card data, where auditability and control validation are mandatory.

- Aligns Kubernetes hardening to a recognized baseline like the Center for Internet Security Kubernetes benchmark, which translates “secure Kubernetes” into verifiable configuration checks.

- Strengthens protection for high-impact areas such as Kubernetes Secrets, access paths, and network boundaries by driving consistent Kubernetes security best practices across clusters.

- Improves detection and response to security events by ensuring the system records and preserves evidence needed to investigate a potential security breach and to demonstrate accountability during audits.
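
The "who changed what, and when" question can be sketched against the audit log itself. The example below is a minimal sketch, assuming the API server writes audit events as JSON lines in the `audit.k8s.io/v1` event format; the function name `summarize_changes` and the sample events are illustrative, not part of any Kubernetes tooling.

```python
import json
from typing import Iterable

# Verbs that change cluster state; read-only verbs such as get/list/watch are skipped.
MUTATING_VERBS = {"create", "update", "patch", "delete", "deletecollection"}


def summarize_changes(audit_lines: Iterable[str]) -> list[dict]:
    """Return who changed what, and when, from JSON-lines audit events."""
    changes = []
    for line in audit_lines:
        line = line.strip()
        if not line:
            continue
        event = json.loads(line)
        # Keep only the final stage of each request so a change is counted once.
        if event.get("stage") != "ResponseComplete":
            continue
        if event.get("verb") not in MUTATING_VERBS:
            continue
        ref = event.get("objectRef") or {}
        changes.append({
            "when": event.get("requestReceivedTimestamp"),
            "who": (event.get("user") or {}).get("username"),
            "verb": event.get("verb"),
            "resource": ref.get("resource"),
            "namespace": ref.get("namespace"),
            "name": ref.get("name"),
            "status": (event.get("responseStatus") or {}).get("code"),
        })
    # Timestamps in one consistent UTC format sort chronologically as strings.
    return sorted(changes, key=lambda c: c["when"] or "")


# Hypothetical sample events for illustration only.
sample = [
    json.dumps({"stage": "ResponseComplete", "verb": "get",
                "user": {"username": "bob"}, "objectRef": {"resource": "pods"},
                "requestReceivedTimestamp": "2024-01-01T09:00:00.000000Z"}),
    json.dumps({"stage": "ResponseComplete", "verb": "delete",
                "user": {"username": "alice"},
                "objectRef": {"resource": "deployments", "namespace": "prod", "name": "api"},
                "responseStatus": {"code": 200},
                "requestReceivedTimestamp": "2024-01-01T10:00:00.000000Z"}),
]

for change in summarize_changes(sample):
    print(change["when"], change["who"], change["verb"], change["resource"], change["name"])
```

What actually lands in the log depends on the audit policy: if the policy does not record a resource or verb, no summary script can recover it later.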

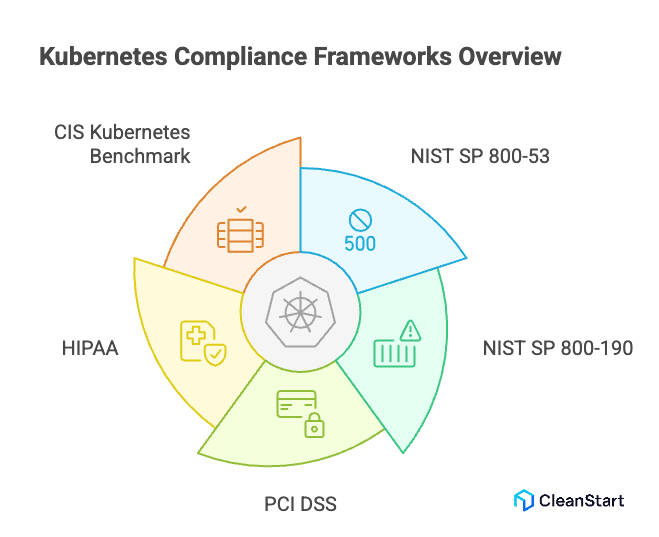

Which compliance frameworks apply to Kubernetes and container workloads?

The compliance frameworks that apply to Kubernetes and container workloads depend on what the workload does (for example, payment processing, healthcare data processing, or SaaS hosting) and on how isolation is enforced at runtime. Runtime isolation includes cgroups resource limits, which cap CPU and memory usage per container to prevent noisy-neighbor impact and reduce denial-of-service risk. The most commonly applied frameworks fall into three groups.
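
In Kubernetes, the cgroups boundaries mentioned above are set through `resources.limits` on each container. The following is a minimal sketch of a check that flags containers with no CPU or memory limit; it assumes the Pod manifest has already been loaded into a Python dict, and the function name `missing_resource_limits` and the sample Pod are hypothetical.

```python
REQUIRED_LIMITS = ("cpu", "memory")


def missing_resource_limits(pod: dict) -> list[str]:
    """List each container/limit pair that the Pod manifest leaves unset."""
    spec = pod.get("spec") or {}
    problems = []
    # Init containers are included: they also consume node resources while running.
    containers = (spec.get("initContainers") or []) + (spec.get("containers") or [])
    for container in containers:
        limits = (container.get("resources") or {}).get("limits") or {}
        name = container.get("name", "<unnamed>")
        for key in REQUIRED_LIMITS:
            if key not in limits:
                problems.append(f"{name}: no {key} limit")
    return problems


# Hypothetical Pod used only to illustrate the check.
example_pod = {
    "spec": {
        "containers": [
            {"name": "api", "resources": {"limits": {"cpu": "500m", "memory": "256Mi"}}},
            {"name": "worker", "resources": {"limits": {"cpu": "1"}}},
        ]
    }
}

print(missing_resource_limits(example_pod))  # ['worker: no memory limit']
```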

Security control catalogs used to meet compliance requirements

- NIST SP 800-53 is used when compliance requires a formal catalog of security and privacy controls that organizations implement and audit across systems, including Kubernetes workloads.

- NIST SP 800-190 is used when compliance requires container-specific security practices across the container ecosystem, including build, deploy, and runtime risks.

Industry and regulatory compliance frameworks that drive Kubernetes compliance

- Payment Card Industry Data Security Standard (PCI DSS) applies when containerized applications store, process, or transmit payment card data, and it defines baseline technical and operational requirements to protect that data.

- HIPAA applies when containerized workloads handle protected health information and must demonstrate regulatory compliance through implemented security practices and auditability.

Secure configuration benchmarks used for compliance checks in Kubernetes clusters

- Center for Internet Security Kubernetes Benchmark is used to define prescriptive Kubernetes hardening checks that improve overall security and make compliance easier to demonstrate through repeatable configuration validation.

How do compliance controls map to Kubernetes security domains?

Compliance controls map to Kubernetes security domains by translating “what must be protected and proven” into enforceable technical boundaries inside a Kubernetes environment. The mapping starts at build time, where the Dockerfile defines the container image contents, default user, and entrypoint behavior. It continues in the cluster, where native controls and automated verification enforce those requirements.

- Identity and access domain maps to controls that restrict who can act in the cluster, typically enforced through Kubernetes RBAC so only approved identities can create, update, or delete resources, which supports zero trust security.

- Network security domain maps to controls that limit east-west and north-south traffic, typically enforced through Kubernetes Network Policies so workloads can only communicate with explicitly allowed endpoints and ports.

- Workload and runtime domain maps to controls that reduce exploitation risk while containers run, using built-in security features and policy enforcement to ensure strong security settings are consistently applied across workloads.

- Supply chain and artifact domain maps to controls that govern what images are allowed to run, using an approved container registry and policy gates so only trusted, verified images enter the cluster, which reduces container security risk across the container ecosystem.

- Vulnerability management domain maps to controls that require container vulnerability scanning for images and workloads, so known security issues are detected, prioritized, and remediated as part of compliance across environments.

- Policy and configuration domain maps to controls that encode required behavior as security policies, then enforce security continuously so compliance checks are objective and repeatable, even when managing Kubernetes at scale.

- Automation and evidence domain maps to controls that automate compliance through recurring validation and reporting, using compliance tools and security tools to make compliance easier to demonstrate and reduce ongoing compliance concerns.
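
Two of the domains above, identity and access and network security, lend themselves to small automated checks. The sketch below assumes manifests are available as Python dicts: `wildcard_rules` flags RBAC rules that grant `*` verbs or resources, and `default_deny_policy` builds a NetworkPolicy that selects every pod in a namespace and allows no traffic. Both function names, the policy name, and the sample Role are illustrative.

```python
def wildcard_rules(role: dict) -> list[dict]:
    """Return the rules in a Role or ClusterRole that grant '*' verbs or resources."""
    return [
        rule for rule in role.get("rules") or []
        if "*" in (rule.get("verbs") or []) or "*" in (rule.get("resources") or [])
    ]


def default_deny_policy(namespace: str) -> dict:
    """Build a NetworkPolicy that selects all pods and allows no ingress or egress."""
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "NetworkPolicy",
        "metadata": {"name": "default-deny-all", "namespace": namespace},
        "spec": {
            "podSelector": {},  # an empty selector matches every pod in the namespace
            "policyTypes": ["Ingress", "Egress"],  # no rules listed, so both directions are denied
        },
    }


# Hypothetical Role used only to illustrate the check.
example_role = {
    "kind": "Role",
    "metadata": {"name": "deployer", "namespace": "prod"},
    "rules": [
        {"apiGroups": ["apps"], "resources": ["deployments"], "verbs": ["get", "update"]},
        {"apiGroups": [""], "resources": ["*"], "verbs": ["*"]},
    ],
}

print(len(wildcard_rules(example_role)))  # 1
print(default_deny_policy("prod")["spec"]["policyTypes"])
```

A default-deny policy only takes effect when the cluster's network plugin enforces NetworkPolicy; explicit allow rules are then layered on top for each permitted path.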

What technical Kubernetes controls are required for container compliance?

The technical Kubernetes controls required for container compliance are the enforceable mechanisms that translate compliance requirements into measurable, repeatable security outcomes across containerized workloads.

- Role-based access control (RBAC) is required to restrict who can interact with cluster resources, which is critical for preventing unauthorized changes. RBAC also limits who can modify a workload’s container entrypoint, the start command that determines which process runs first inside the container and therefore governs its runtime behavior.

- Policy-driven workload admission controls are required to block non-compliant workloads at deployment time, ensuring container security across environments rather than relying on manual reviews.

- Secure configuration of Kubernetes services and cluster components is required to reduce attack surface and ensure security is applied consistently, even as clusters scale or change.

- Automated security controls are required to continuously validate configurations and workload behavior, making compliance easier to demonstrate and reducing human error.

- Runtime enforcement controls specific to containers are required to detect and stop unexpected behavior after deployment, since many compliance failures occur post-launch.

- Centralized logging and evidence collection is required to support audits and prove that controls were active, enforced, and monitored over time.

- Integrated compliance tooling and security tools are required to operationalize a comprehensive container security strategy, especially where compliance can be challenging to maintain manually.
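
The admission control item above can be sketched as a pure validation function. This is a minimal sketch, loosely modeled on a few checks from the Kubernetes Pod Security Standards rather than a full implementation of them; in a real cluster this logic would sit behind an admission controller or validating webhook. The function name `admission_violations` and the sample Pods are hypothetical.

```python
def admission_violations(pod: dict) -> list[str]:
    """Return reasons a Pod manifest should be rejected at deployment time."""
    spec = pod.get("spec") or {}
    violations = []
    if spec.get("hostNetwork"):
        violations.append("pod uses hostNetwork")
    pod_ctx = spec.get("securityContext") or {}
    containers = (spec.get("initContainers") or []) + (spec.get("containers") or [])
    for container in containers:
        ctx = container.get("securityContext") or {}
        name = container.get("name", "<unnamed>")
        if ctx.get("privileged"):
            violations.append(f"{name}: runs privileged")
        # Require an explicit false; an unset field is treated as non-compliant.
        if ctx.get("allowPrivilegeEscalation") is not False:
            violations.append(f"{name}: allowPrivilegeEscalation is not false")
        # A container-level runAsNonRoot overrides the pod-level value.
        run_as_non_root = ctx.get("runAsNonRoot", pod_ctx.get("runAsNonRoot"))
        if run_as_non_root is not True:
            violations.append(f"{name}: runAsNonRoot is not true")
    return violations


# Hypothetical manifests used only to illustrate the check.
risky_pod = {
    "spec": {
        "hostNetwork": True,
        "containers": [{"name": "app", "securityContext": {"privileged": True}}],
    }
}
hardened_pod = {
    "spec": {
        "securityContext": {"runAsNonRoot": True},
        "containers": [{"name": "app", "securityContext": {"allowPrivilegeEscalation": False}}],
    }
}

print(admission_violations(risky_pod))
print(admission_violations(hardened_pod))  # []
```

Returning reasons rather than a bare true/false keeps the rejection explainable to the team whose deployment was blocked and doubles as audit evidence that the control fired.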

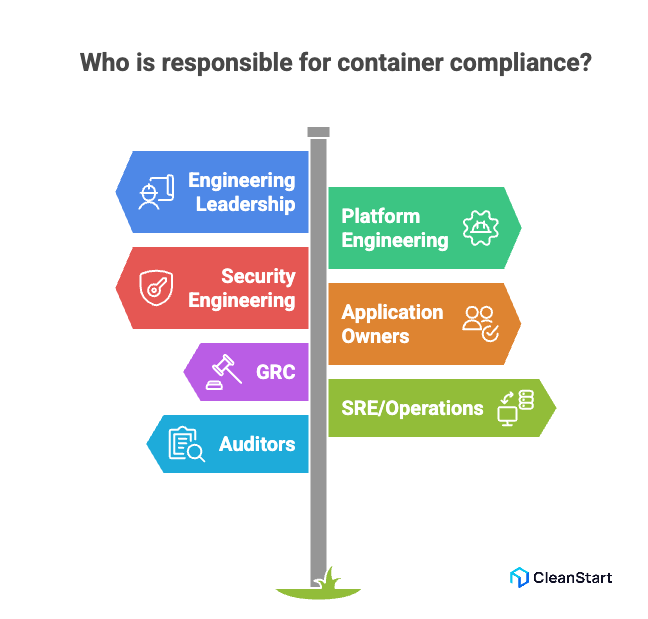

Who is responsible for container compliance in cloud native teams?

Container compliance in cloud native teams is a shared responsibility with clear ownership boundaries, because controls span build pipelines, Kubernetes operations, and audit evidence, and that division is what makes compliance easier to demonstrate.

- Engineering leadership (CTO, VP Engineering, Head of Platform) is responsible for defining the compliance scope, resourcing the program, and ensuring teams implement controls that align with recognized guidance, such as publications from the National Institute of Standards and Technology (NIST).

- Platform engineering (Kubernetes platform team) is responsible for cluster-level compliance controls, including baseline hardening, policy enforcement mechanisms, and standard guardrails applied across environments.

- Security engineering (AppSec, Cloud Security, DevSecOps) is responsible for translating requirements into technical controls, validating coverage, and setting security standards for what “compliant” means for containerized workloads. This includes requiring a software bill of materials (SBOM) that enumerates image components and dependencies so teams can verify provenance and trace vulnerable packages.

- Application owners (service teams) are responsible for workload-level compliance, including secure configuration, least-privilege access, and fixing non-compliant behaviors in the services they own.

- Governance, risk, and compliance (GRC) is responsible for interpreting regulatory expectations, maintaining control mappings, and ensuring evidence meets audit expectations so compliance is provable, not assumed.

- Site reliability engineering (SRE) and operations are responsible for operational controls such as monitoring, incident response readiness, and ensuring evidence remains available and accurate over time.

- Internal audit or external auditors are responsible for independent verification that controls exist, operate as stated, and produce evidence that supports compliance claims.
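
The SBOM requirement above is what makes "trace vulnerable packages" a lookup rather than an investigation. The sketch below assumes each image has an SBOM in CycloneDX JSON form (a top-level `components` list whose entries carry `name` and `version`) already loaded as a dict. It inspects top-level components only, and the function name, image names, and package data are made up for illustration.

```python
def images_containing(sboms: dict[str, dict], package: str,
                      bad_versions: set[str]) -> dict[str, list[str]]:
    """Map each image to the affected versions of `package` found in its SBOM."""
    hits = {}
    for image, sbom in sboms.items():
        versions = sorted(
            component["version"]
            for component in sbom.get("components") or []
            if component.get("name") == package
            and component.get("version") in bad_versions
        )
        if versions:
            hits[image] = versions
    return hits


# Hypothetical SBOM data keyed by image reference.
example_sboms = {
    "registry.example.com/shop/api:1.4": {
        "bomFormat": "CycloneDX",
        "components": [
            {"type": "library", "name": "examplelib", "version": "2.0.1"},
            {"type": "library", "name": "otherlib", "version": "1.0.0"},
        ],
    },
    "registry.example.com/shop/web:3.2": {
        "bomFormat": "CycloneDX",
        "components": [
            {"type": "library", "name": "examplelib", "version": "2.1.0"},
        ],
    },
}

print(images_containing(example_sboms, "examplelib", {"2.0.0", "2.0.1"}))
```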

What common mistakes prevent effective container compliance?

Common mistakes that prevent effective container compliance include:

- Treating compliance as documentation only, which makes it harder to demonstrate compliance because controls are written down but not enforced at runtime.

- Using containers without defining compliance ownership, which causes control gaps because no team is accountable for important compliance outcomes.

- Allowing any image source, which increases risk because teams use container images from ungoverned sources and cannot prove what is running.

- Relying on manual checks instead of automation, which creates inconsistent results and makes it harder to demonstrate compliance at scale.

- Using security tools like scanners without remediation workflows, which leaves known issues unresolved and turns findings into permanent risk.

- Skipping policy enforcement at deploy time, which allows non-compliant workloads into production and forces reactive fixes later.

- Treating compliance as a one-time project, which fails because container environments change continuously and controls drift over time.

- Ignoring evidence collection, which fails audits because teams cannot produce verifiable records of control operation when asked.
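
The "allowing any image source" mistake is one of the easier ones to check mechanically. The following is a minimal sketch, assuming image references follow the usual Docker naming convention (the first path component is a registry only if it contains a dot or colon, or is `localhost`; otherwise the image comes from Docker Hub). The function names and the registry `registry.example.com` are hypothetical.

```python
def image_registry(image: str) -> str:
    """Return the registry host of an image reference, defaulting to docker.io."""
    first, sep, _ = image.partition("/")
    # Without a slash, or with a first component that does not look like a host,
    # the reference resolves to Docker Hub.
    if sep and ("." in first or ":" in first or first == "localhost"):
        return first
    return "docker.io"


def image_violations(image: str, allowed_registries: set[str]) -> list[str]:
    """Flag images from unapproved registries or without a pinned digest."""
    problems = []
    registry = image_registry(image)
    if registry not in allowed_registries:
        problems.append(f"registry {registry} is not approved")
    # A tag can be moved to different content; a sha256 digest cannot.
    if "@sha256:" not in image:
        problems.append("image is not pinned by digest")
    return problems


approved = {"registry.example.com"}  # hypothetical allowlist
pinned = "registry.example.com/team/app@sha256:" + "a" * 64

print(image_violations("nginx:latest", approved))
print(image_violations(pinned, approved))  # []
```

Pinning by digest is what lets a team prove which image content was running, since the same tag can point to different content over time.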

FAQs

1. Does Kubernetes compliance require third-party security tools?

Kubernetes provides baseline controls, but most teams use external tools to automate evidence collection, runtime monitoring, and compliance checks at scale.

2. How often should container compliance be reviewed or reassessed?

Compliance should be continuously validated because Kubernetes environments change daily through deployments, scaling, and configuration updates.

3. Can container compliance be achieved without slowing down development teams?

Yes, when compliance controls are automated and embedded into CI/CD workflows instead of enforced through manual reviews.
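
As an illustration of an embedded control, a pipeline step can decide pass or fail from scanner findings without a human in the loop. This is a minimal sketch under stated assumptions: the findings format (a list of dicts with `id` and `severity`) is hypothetical and does not match any particular scanner's output, and the identifiers are placeholders.

```python
SEVERITY_RANK = {"LOW": 0, "MEDIUM": 1, "HIGH": 2, "CRITICAL": 3}


def gate(findings: list[dict], fail_at: str = "HIGH") -> tuple[bool, list[str]]:
    """Return (passed, blocking_ids) for findings at or above the threshold."""
    threshold = SEVERITY_RANK[fail_at]
    blocking = [
        finding["id"]
        for finding in findings
        # Unknown or missing severities are ranked at the threshold, so the gate fails closed.
        if SEVERITY_RANK.get(str(finding.get("severity", "")).upper(), threshold) >= threshold
    ]
    return (not blocking, blocking)


# Hypothetical findings; real scanners use their own schemas and identifiers.
example_findings = [
    {"id": "VULN-1", "severity": "LOW"},
    {"id": "VULN-2", "severity": "CRITICAL"},
]

passed, blocking = gate(example_findings)
print(passed, blocking)  # False ['VULN-2']
```

In a pipeline, the step would exit non-zero when `passed` is false, so the build stops on its own and the blocking identifiers tell the developer what to fix.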

4. Is container compliance the same across all cloud providers?

No. The compliance requirements stay the same, but implementation details vary with each provider’s managed Kubernetes service and shared responsibility model.

5. What evidence is typically required to prove Kubernetes compliance?

Auditors usually expect configuration baselines, access control records, audit logs, vulnerability reports, and proof of enforcement over time.

6. Can small teams realistically maintain Kubernetes compliance?

Yes, if compliance is designed into the platform early and automated, small teams can maintain compliance with less overhead than traditional infrastructure.